Introduction

GPT, or Generative Pre-trained Transformer, is a type of artificial intelligence (AI) model used for natural language processing tasks such as language translation, text summarization, and chatbot conversation. GPT models are trained on large amounts of text data and use machine learning to pick up the patterns and structures of human language. They can then be used to generate human-like text or to interpret and respond to text inputs.

GPT Models

GPT models are often used in chatbots to enable natural language conversation with users. For example, a chatbot built on a GPT model might understand and respond to user inquiries much as a human would.

GPT models are based on the Transformer architecture, a type of neural network designed for processing sequential data such as text. Transformer models stack multiple layers, each combining self-attention with a feed-forward neural network. Self-attention lets every position in the input weigh every other position it can see, which is how the model captures long-range dependencies and generates coherent output.

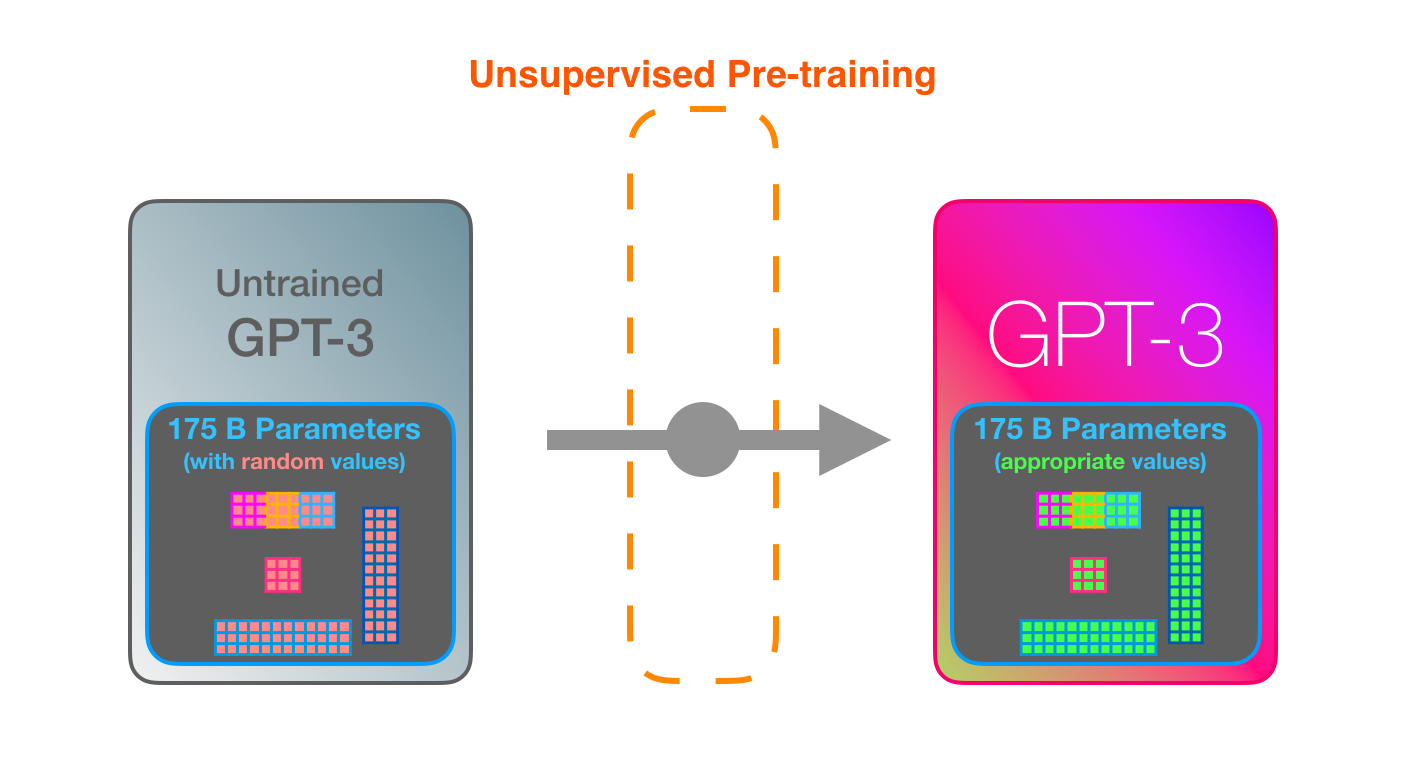
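To make the self-attention idea concrete, here is a minimal sketch in plain Python. It is an illustration under simplifying assumptions, not how any production GPT model is implemented: the function names are made up for this example, there is a single attention head, and the toy token vectors are reused directly as queries, keys, and values, where a real model would first pass them through learned projection matrices. The mask that lets each position see only itself and earlier positions is what GPT-style models use when generating text left to right.

```python
import math

def softmax(scores):
    """Turn a list of scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def causal_self_attention(queries, keys, values):
    """Scaled dot-product attention where position i sees positions 0..i only."""
    dim = len(keys[0])
    outputs, all_weights = [], []
    for i, query in enumerate(queries):
        # Score the current position against itself and every earlier position.
        scores = [
            sum(q * k for q, k in zip(query, keys[j])) / math.sqrt(dim)
            for j in range(i + 1)
        ]
        weights = softmax(scores)
        # The output is a weighted average of the visible value vectors.
        output = [
            sum(w * values[j][d] for j, w in enumerate(weights))
            for d in range(len(values[0]))
        ]
        outputs.append(output)
        all_weights.append(weights)
    return outputs, all_weights

# Three toy token vectors (hypothetical values chosen for readability).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = causal_self_attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row holds the attention weights for one position. The first row has a single weight because the first token can only attend to itself, while later rows spread their weight across all earlier tokens.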

GPT models are pre-trained on large amounts of text data using unsupervised learning (often called self-supervised learning in this setting). The model is not given human-written labels or guidance on what to learn. Instead, it is trained to predict the next token in a passage from the tokens that come before it, so the text itself supplies the training signal. Once a GPT model has been pre-trained, it can be fine-tuned on specific tasks such as language translation or chatbot conversation.

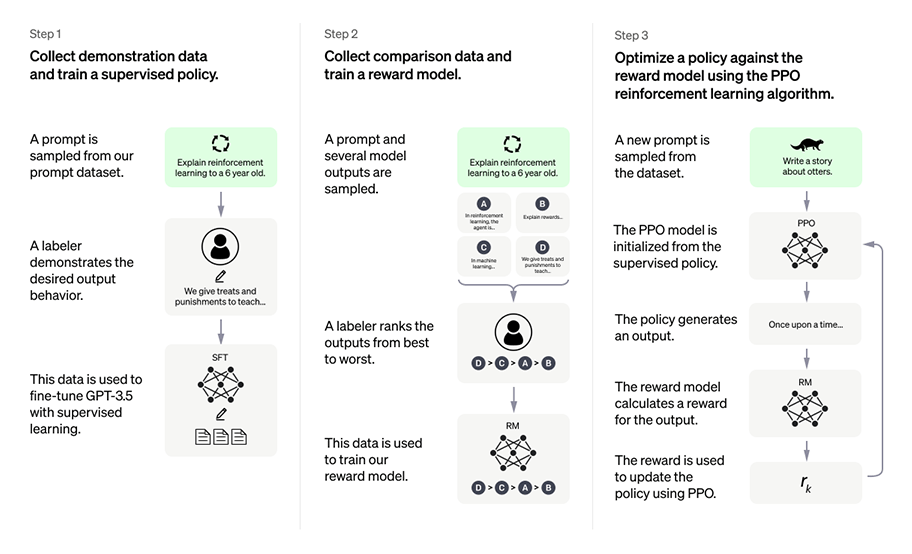
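Pre-training on unlabeled text works because the text supplies its own targets: every word can serve as the answer for the words that precede it. The sketch below shows how such training pairs could be built. It is a simplified illustration; the function name and `context_size` parameter are made up for this example, and splitting on whitespace stands in for the subword tokenizers that real GPT models use.

```python
def next_token_examples(text, context_size=3):
    """Build (context, target) pairs from raw text; no human labels needed."""
    tokens = text.split()  # whitespace split stands in for a real tokenizer
    examples = []
    for i in range(1, len(tokens)):
        # The context is up to `context_size` tokens before position i.
        context = tokens[max(0, i - context_size):i]
        examples.append((context, tokens[i]))
    return examples

corpus = "the home team won the match"
examples = next_token_examples(corpus)
for context, target in examples:
    print(context, "->", target)
```

A six-word sentence yields five training pairs, which is why a large text corpus provides an enormous number of examples without anyone labeling it by hand.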

Fine-tuning involves continuing to train the pre-trained model on a smaller, task-specific dataset, adjusting its weights and biases to better fit the requirements of that task.

GPT models have achieved impressive results on a variety of natural language processing tasks, and are widely used in applications such as chatbots, language translation, and text generation. There have been several recent upgrades to GPT models, and there are likely to be more in the future as researchers continue to improve the capabilities of these models.
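To make the fine-tuning step described above concrete, here is a deliberately tiny sketch: a model with one weight and one bias, starting from values that stand in for pre-trained parameters, is nudged by gradient descent toward a small task dataset. Everything here is an assumption for illustration (the starting values, the data, the learning rate, and the function names). A real GPT model has billions of parameters and is fine-tuned with a deep learning framework, but the principle of starting from existing parameters and taking small corrective steps is the same.

```python
def mean_squared_error(weight, bias, examples):
    """Average squared gap between predictions and targets."""
    return sum((weight * x + bias - y) ** 2 for x, y in examples) / len(examples)

def fine_tune(weight, bias, examples, learning_rate=0.05, epochs=200):
    """Nudge an existing weight and bias toward a small task-specific dataset."""
    for _ in range(epochs):
        for x, target in examples:
            error = (weight * x + bias) - target
            # Gradient of half the squared error with respect to each parameter.
            weight -= learning_rate * error * x
            bias -= learning_rate * error
    return weight, bias

# Hypothetical starting point standing in for pre-trained parameters.
pretrained_weight, pretrained_bias = 1.0, 0.0
# Hypothetical task data that follows y = 2x + 1.
task_examples = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]

before = mean_squared_error(pretrained_weight, pretrained_bias, task_examples)
tuned_weight, tuned_bias = fine_tune(pretrained_weight, pretrained_bias, task_examples)
after = mean_squared_error(tuned_weight, tuned_bias, task_examples)
print(f"loss before: {before:.3f}, loss after: {after:.6f}")
```

The loss on the task data drops as the parameters move from their starting values toward ones that fit the new examples.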

Upgrades

One upgrade to GPT models is GPT-3 (Generative Pre-trained Transformer 3), a much larger and more powerful successor to the original GPT model and GPT-2. With 175 billion parameters and a larger training dataset than its predecessors, GPT-3 can generate highly coherent, human-like text.

A more recent upgrade is GPT-4 (Generative Pre-trained Transformer 4), a more capable successor to GPT-3. OpenAI has not published its size or training data, but GPT-4 performs a wider range of tasks, including translation and summarization, and can accept images as well as text as input.

Further upgrades are likely as researchers continue to improve the capabilities of these models and apply them to a wider range of tasks.

Use in the Sports Industry

Predictive modeling: GPT models can be used to make predictions about the outcomes of sports events, and of bets placed on them, based on past data. For example, a GPT model might be trained on data about past games and players and then used to predict the outcome of future games.

Text generation: GPT models can be used to generate human-like text, which can be useful for tasks such as creating betting analyses or tips.

Text summarization: GPT models can be used to generate summaries of longer texts, which can be helpful for quickly getting an overview of betting information or strategies.

Chatbots: GPT models can be used to enable natural language conversation with users in chatbot applications, which can be helpful for providing information about betting options or odds.
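Because GPT models work on text, past game data has to be rendered as text before a model can be trained on it or prompted with it. The sketch below shows one way this could look. The record fields, the prompt layout, and the team names are all made up for illustration, and nothing here calls a real model or says anything about how accurate such predictions would be.

```python
def game_to_example(game):
    """Render one past game as a prompt and the completion a model should learn."""
    prompt = (
        f"Home: {game['home']} (last five: {game['home_form']})\n"
        f"Away: {game['away']} (last five: {game['away_form']})\n"
        "Winner:"
    )
    # The leading space keeps the completion separate from the prompt text.
    return {"prompt": prompt, "completion": " " + game["winner"]}

# Made-up records for illustration only; not real teams or results.
past_games = [
    {"home": "Team A", "home_form": "WWLDW",
     "away": "Team B", "away_form": "LLDWL", "winner": "Team A"},
    {"home": "Team C", "home_form": "DLLWL",
     "away": "Team A", "away_form": "WLDWW", "winner": "Team A"},
]

training_examples = [game_to_example(game) for game in past_games]
print(training_examples[0]["prompt"])
print(training_examples[0]["completion"])
```

The same prompt layout, with the winner left off, could then be used to ask a fine-tuned model about an upcoming game.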
